Questions 1-5 are based on the following passage.

This passage is excerpted from John P. A. Ioannidis, “Scientific Research Needs an Overhaul,” ©2014 by Scientific American.

Earlier this year a series of papers in The Lancet reported that 85 percent of the $265 billion spent each year on medical research is wasted. This is not because of fraud, although it is true that retractions are on the rise. Instead, it is because too often absolutely nothing happens after initial results of a study are published. No follow-up investigations ensue to replicate or expand on a discovery. No one uses the findings to build new technologies.

The problem is not just what happens after publication—scientists often have trouble choosing the right questions and properly designing studies to answer them. Too many neuroscience studies test too few subjects to arrive at firm conclusions. Researchers publish reports on hundreds of treatments for diseases that work in animal models but not in humans. Drug companies find themselves unable to reproduce promising drug targets published by the best academic institutions. The growing recognition that something has gone awry in the laboratory has led to calls for, as one might guess, more research on research (aka, meta-research)—attempts to find protocols that ensure that peer-reviewed studies are, in fact, valid.

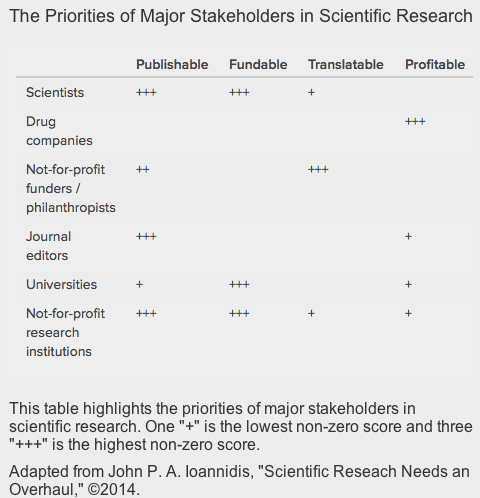

It will take a concerted effort by scientists and other stakeholders to fix this problem. We need to identify and correct system-level flaws that too often lead us astray. This is exactly the goal of a new center at Stanford University (the Meta-Research Innovation Center at Stanford), which will seek to study research practices and how these can be optimized. It will examine the best means of designing research protocols and agendas to ensure that the results are not dead ends but rather that they pave a path forward.

The center will do so by exploring what are the best ways to make scientific investigation more reliable and efficient. For example, there is a lot of interest on collaborative team science, study registration, stronger study designs and statistical tools, and better peer review, along with making scientific data, analyses and protocols widely available so that others can replicate experiments, thereby fostering trust in the conclusions of those studies. Reproducing other scientists’ analyses or replicating their results has too often in the past been looked down on with a kind of “me-too” derision that would waste resources—but often they may help avoid false leads that would have been even more wasteful.